The Night Everything Broke

It was 2 AM. My phone wouldn't stop buzzing.

The web app was down. Not slow — completely down. The single EC2 instance I'd been running had died, and since there was nothing behind it, no load balancer, no backup, nothing — that was it. Every user hitting a blank screen.

I spent the next two hours manually bringing it back up, sweating through what should have been a 10-minute fix. The real problem wasn't the outage itself. It was that I had built something fragile on purpose, just because it was easier at the time.

That night I decided to stop cutting corners on availability. This post is what I learned.

Why This Actually Matters

I want to be real about something: a lot of Terraform tutorials treat high availability like an advanced topic you'll get to "later." I don't think that's right.

The moment you put something in production — even a side project, even something with 10 users — you're making an implicit promise that it'll be there when people need it. A single EC2 instance can't keep that promise. AWS itself will retire instances, AZs have outages, hardware fails. It's not a question of if, it's when.

More practically: if you're learning Terraform to work in the industry, this is the architecture you'll be expected to know. Most mid-to-large engineering teams run their production services behind a load balancer with auto scaling. Walking into a new job and seeing a naked EC2 instance in production is a red flag, and being the person who knows how to fix it is valuable.

The ASG + ALB pattern I'll walk through here isn't fancy. It's just the baseline done properly.

The Inherited Mess

Here's roughly what I was working with before that bad night:

resource "aws_instance" "web" {
  ami           = "ami-0c55b159cbfafe1f0"
  instance_type = "t2.micro"

  user_data = <<-EOF
              #!/bin/bash
              echo "Hello, World" > index.html
              nohup busybox httpd -f -p 8080 &
              EOF
}

One server. Hardcoded everything. No way to reuse this for staging without editing the file. No resilience whatsoever.

The first thing I needed to fix wasn't even the availability — it was the fact that the config itself was rigid. Hardcoded values meant I couldn't deploy the same setup to different environments without making manual changes every time, which is exactly how mistakes happen.

Input Variables: Stop Hardcoding Everything

The fix is input variables. Instead of baking values directly into your resources, you declare them separately and reference them:

variable "server_port" {
  description = "The port the server will use for HTTP requests"
  type        = number
  default     = 8080
}

variable "instance_type" {
  description = "EC2 instance type"
  type        = string
  default     = "t2.micro"
}

variable "environment" {
  description = "dev, staging, or prod"
  type        = string
  default     = "dev"
}

Now deploying to prod with a bigger instance is just:

terraform apply -var="instance_type=t3.small" -var="environment=prod"

No file editing, no copy-pasting configs, no "wait which version did I deploy again." The same code runs everywhere, and the differences live in the variables.
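Since the environment variable is only meant to be dev, staging, or prod, one optional guard is a validation block. This is a sketch that assumes Terraform 0.13 or newer; it would replace the plain environment declaration above, and the error wording is my own.

```hcl
variable "environment" {
  description = "dev, staging, or prod"
  type        = string
  default     = "dev"

  # Reject anything outside the three expected environment names at plan time.
  validation {
    condition     = contains(["dev", "staging", "prod"], var.environment)
    error_message = "The environment must be one of: dev, staging, prod."
  }
}
```

With this in place, a typo such as -var="environment=prd" fails during plan instead of quietly creating resources under the wrong name.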

Local Variables: Don't Repeat Yourself

Once I had variables, I noticed I was writing the same name patterns and tags everywhere. Six resources, six places to update if the name changed. Locals clean this up:

locals {
  service_name = "web-app"
  environment  = var.environment

  common_tags = {
    Environment = local.environment
    Service     = local.service_name
    ManagedBy   = "Terraform"
  }

  name_prefix = "${local.service_name}-${local.environment}"
}

Now every resource just uses local.name_prefix and local.common_tags. Change the environment variable, everything updates. It's a small thing but it makes configs a lot easier to maintain as they grow.
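When a resource needs one extra tag on top of the shared set, the built-in merge() function can layer it over local.common_tags. A minimal sketch, where the resource name "example" and the Name tag value are purely illustrative and not part of the original config:

```hcl
# Hypothetical resource, shown only to illustrate the locals in use.
resource "aws_security_group" "example" {
  name = "${local.name_prefix}-example-sg"

  # merge() returns a new map: the common tags plus a resource-specific Name.
  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-example-sg"
  })
}
```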

Making the Web Server Configurable

With variables and locals wired up, here's the updated server setup:

resource "aws_security_group" "instance" {
  name = "${local.name_prefix}-instance-sg"

  ingress {
    from_port   = var.server_port
    to_port     = var.server_port
    protocol    = "tcp"
    cidr_blocks = ["0.0.0.0/0"]
  }

  tags = local.common_tags
}

data "aws_ami" "amazon_linux" {
  most_recent = true
  owners      = ["amazon"]

  filter {
    name   = "name"
    values = ["amzn2-ami-hvm-*-x86_64-gp2"]
  }
}

resource "aws_launch_template" "web" {
  image_id               = data.aws_ami.amazon_linux.id
  instance_type          = var.instance_type
  vpc_security_group_ids = [aws_security_group.instance.id]

  user_data = base64encode(<<-EOF
    #!/bin/bash
    mkdir -p /var/www/html
    echo "Hello from ${var.environment}" > /var/www/html/index.html
    cd /var/www/html && nohup python3 -m http.server ${var.server_port} &
    EOF
  )

  lifecycle {
    create_before_destroy = true
  }
}

A few things worth noting here. I'm using aws_launch_template rather than aws_launch_configuration — the older resource is deprecated and AWS recommends launch templates for all new setups. The user_data needs to be wrapped in base64encode() with launch templates; the old resource handled that encoding internally so it's easy to miss.

I also hit a 503 the first time around because I was trying to start the server with busybox httpd, which isn't installed on Amazon Linux 2. Switched to python3 -m http.server which is already on the AMI — no installation needed, no 503.

For the AMI, I'm using a data block to always fetch the latest Amazon Linux 2 AMI dynamically. Hardcoding an AMI ID will eventually break as AWS deregisters old images — learned that one after a failed terraform apply.

The Auto Scaling Group: Finally, Some Resilience

This is the piece that would have saved my 2 AM. An Auto Scaling Group manages a pool of EC2 instances and automatically replaces any that go unhealthy:

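# Lists the AZs available in this region. Nothing below references it;
# the ASG gets its AZs from the subnets in vpc_zone_identifier.
data "aws_availability_zones" "available" {
  state = "available"
}

resource "aws_autoscaling_group" "web" {
  min_size         = 2
  max_size         = 5
  desired_capacity = 2

  launch_template {
    id      = aws_launch_template.web.id
    version = "$Latest"
  }

  # Subnets (and therefore AZs) the instances can launch into.
  vpc_zone_identifier = data.aws_subnets.default.ids

  # Register instances with the ALB target group and use its health checks.
  target_group_arns = [aws_lb_target_group.web.arn]
  health_check_type = "ELB"

  tag {
    key                 = "Name"
    value               = "${local.name_prefix}-web"
    propagate_at_launch = true
  }
}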

min_size = 2 means there are always at least two instances running. Because vpc_zone_identifier lists subnets in different Availability Zones, the ASG spreads the instances across them, so if one AZ has a problem, the other keeps going. desired_capacity = 2 makes the starting size explicit; if you leave it out, AWS uses min_size as the initial desired capacity. health_check_type = "ELB" means the ASG uses the load balancer's health checks, on top of the basic EC2 status checks, to decide when an instance needs replacing.
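One thing the ASG above does not do by itself is grow toward max_size under load; that needs a scaling policy. The following is a sketch of a target tracking policy, not part of my original setup. The resource name "cpu" and the 60% CPU target are assumptions picked for illustration.

```hcl
# Hypothetical policy: keep average CPU across the group near 60%.
resource "aws_autoscaling_policy" "cpu" {
  name                   = "${local.name_prefix}-cpu-target"
  autoscaling_group_name = aws_autoscaling_group.web.name
  policy_type            = "TargetTrackingScaling"

  target_tracking_configuration {
    predefined_metric_specification {
      predefined_metric_type = "ASGAverageCPUUtilization"
    }

    # Assumed target; tune it to the workload.
    target_value = 60.0
  }
}
```

If a policy like this is changing the instance count, a later terraform apply will try to set it back to desired_capacity = 2. A common way around that is adding ignore_changes = [desired_capacity] to a lifecycle block on the ASG.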

The Load Balancer: One Stable Entry Point

The ASG gives you multiple instances. The Application Load Balancer gives users a single address that routes traffic across all of them.

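First, I need to pull in the default VPC and its subnets. Terraform doesn't know about existing AWS resources unless you explicitly fetch them with a data block. (The ASG above already references data.aws_subnets.default; Terraform resolves references regardless of the order the blocks appear in.)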

data "aws_vpc" "default" {
  default = true
}

data "aws_subnets" "default" {
  filter {
    name   = "vpc-id"
    values = [data.aws_vpc.default.id]
  }
}

Then the load balancer resources:

# The ALB needs its own security group, separate from the instances.
# This one opens port 80 to the internet and allows all outbound traffic.
resource "aws_security_group" "alb" {
  name = "${local.name_prefix}-alb-sg"

  ingress {
    from_port   = 80
    to_port     = 80
    protocol    = "tcp"
    cidr_blocks = ["0.0.0.0/0"]
  }

  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }

  tags = local.common_tags
}

# The ALB itself spans all default subnets so it can reach instances in any AZ.
resource "aws_lb" "web" {
  name               = "${local.name_prefix}-alb"
  load_balancer_type = "application"
  subnets            = data.aws_subnets.default.ids
  security_groups    = [aws_security_group.alb.id]

  tags = local.common_tags
}

# The target group is what the ALB routes traffic to.
# It also defines the health check: if an instance stops returning 200 on /,
# the ALB marks it unhealthy and the ASG replaces it.
resource "aws_lb_target_group" "web" {
  name     = "${local.name_prefix}-tg"
  port     = var.server_port
  protocol = "HTTP"
  vpc_id   = data.aws_vpc.default.id

  health_check {
    path                = "/"
    protocol            = "HTTP"
    matcher             = "200"
    interval            = 15
    timeout             = 3
    healthy_threshold   = 2
    unhealthy_threshold = 2
  }
}

# The listener is the rule that says: anything hitting port 80 on the ALB
# gets forwarded to the target group.
resource "aws_lb_listener" "http" {
  load_balancer_arn = aws_lb.web.arn
  port              = 80
  protocol          = "HTTP"

  default_action {
    type             = "forward"
    target_group_arn = aws_lb_target_group.web.arn
  }
}

# Print the DNS name after apply so I can test immediately without
# digging through the AWS console.
output "alb_dns_name" {
  value       = aws_lb.web.dns_name
  description = "The DNS name of the load balancer"
}
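With the ALB in front, the instances no longer need to accept traffic from the whole internet. One optional tightening, which is a variation and not what I deployed above, is to let the instance security group accept traffic only from the ALB's security group. A sketch that would replace the earlier aws_security_group.instance block:

```hcl
resource "aws_security_group" "instance" {
  name = "${local.name_prefix}-instance-sg"

  ingress {
    from_port = var.server_port
    to_port   = var.server_port
    protocol  = "tcp"

    # Only traffic originating from the ALB's security group is allowed,
    # which also covers the ALB health checks.
    security_groups = [aws_security_group.alb.id]
  }

  tags = local.common_tags
}
```

The trade-off is that you can no longer curl an instance directly on its public IP, which is usually the point.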

After terraform apply, I grab the DNS name from the output and test it with curl, or just paste it into a browser:

http://web-app-dev-alb-70346136.us-east-1.elb.amazonaws.com

# Hello from dev
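The same smoke test can be written into the config with a check block. This sketch assumes Terraform 1.5 or newer and the hashicorp/http provider; the check name "alb_responds" and the error wording are my own.

```hcl
# Hypothetical check: request the ALB and expect an HTTP 200.
check "alb_responds" {
  data "http" "alb" {
    url = "http://${aws_lb.web.dns_name}"
  }

  assert {
    condition     = data.http.alb.status_code == 200
    error_message = "The load balancer did not return HTTP 200."
  }
}
```

A failed check only produces a warning and does not block the apply. Right after the first apply the instances may still be registering, so a warning there is not necessarily a real problem.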

Then I manually terminated one of the EC2 instances from the AWS console just to see what would happen. Within about 45 seconds, the ASG had launched a replacement. The load balancer kept routing to the healthy instance the whole time. No downtime.

That's the moment it clicked for me.

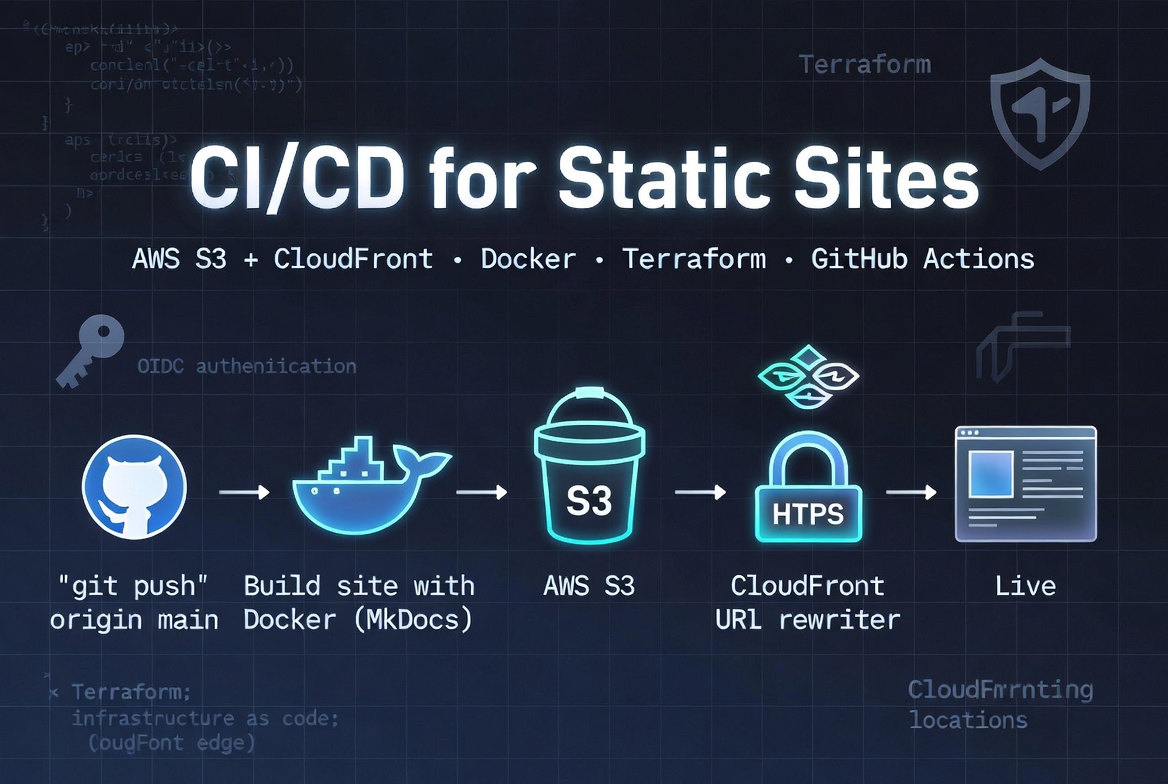

Architecture Diagram

Resource Map after deployment

Different Environments, Same Code

The last piece is managing environments. I keep a separate .tfvars file for each:

# dev.tfvars
instance_type = "t2.micro"
environment   = "dev"
server_port   = 8080

# prod.tfvars
instance_type = "t3.small"
environment   = "prod"
server_port   = 8080

terraform apply -var-file="dev.tfvars"
terraform apply -var-file="prod.tfvars"

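Same Terraform code, different configs. No copy-pasting, no drift between environments. One caveat: each environment needs its own state (a separate workspace or backend, for example). Run both commands against the same state and the second apply will modify the first environment in place instead of creating a new one.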

Quick Reference

| Thing | What it does |
|---|---|
| variable | Parameterizes your config so the same code works across environments |
| locals | Computed values you reuse inside a module: tags, name prefixes, etc. |
| aws_launch_template | The template the ASG uses to spin up new instances (replaces the deprecated aws_launch_configuration) |
| aws_autoscaling_group | Manages the instance pool, replaces unhealthy instances automatically |
| health_check_type = "ELB" | Tells the ASG to trust the load balancer's health checks |
| aws_lb | The Application Load Balancer sitting in front of everything |
| create_before_destroy | Creates the replacement launch template before destroying the old one, so the ASG never references a deleted template |

Wrapping Up

I went from one fragile server to a setup that survives instance crashes and AZ failures and has headroom (max_size = 5) to absorb traffic spikes once a scaling policy is attached. The Terraform code for all of it is maybe 120 lines.

The 2 AM incident was frustrating at the time, but it pushed me to actually understand how resilient infrastructure is built rather than just reading about it. If your current setup is a single EC2 instance, I hope this gives you a clear path to something better.

Next up: Terraform state management and remote backends — because terraform.tfstate sitting on your laptop is the next thing that'll cause a bad night.

This post is part of a 30-day Terraform learning journey.
